/////////////////////////////////////// c%

claude zsh: command not found: claude ssh hatchery

cd infinity-topos

flox activate ╭────────────────────────────────────────────╮ │ ✻ Welcome to Claude Code research preview! │ │ │ │ /help for help │ │ │ │ cwd: /Users/barton/infinity-topos │ ╰────────────────────────────────────────────╯

✔ Found 27 MCP servers • /mcp ✔ Loaded project + user memory • /memory

/mcp ⎿ MCP Server Status ⎿ ⎿ • aider-mcp-server: connected ⎿ • anti-bullshit: connected ⎿ • apple-mcp: connected ⎿ • babashka: connected ⎿ • base-mcp: connected ⎿ • dialectical-coin: connected ⎿ • exa: connected ⎿ • firecrawl: connected ⎿ • github: connected ⎿ • github-mcp-server: failed ⎿ • kuzu: connected ⎿ • linear: connected ⎿ • manifold-markets: connected ⎿ • marginalia: connected ⎿ • mcpx: connected ⎿ • metaphor: connected ⎿ • motherduck: failed ⎿ • ngrok: connected ⎿ • omnimcp: failed ⎿ • paperproof-mcp: connected ⎿ • playwright: connected ⎿ • pulse: failed ⎿ • say: connected ⎿ • screenpipe: connected ⎿ • supabase: connected ⎿ • telegram: connected ⎿ • whatsapp: connected ⎿ ⎿ Run claude with --mcp-debug to see error logs inline, or view log files in: /Users/barton/Library/Caches/claude-cli-nodejs/-Users-barton-infinity-topos

Source: https://modelcontextprotocol.io/clients

A list of applications that support MCP integrations

This page provides an overview of applications that support the Model Context Protocol (MCP). Each client may support different MCP features, allowing for varying levels of integration with MCP servers.

| Client | Resources | Prompts | Tools | Sampling | Roots | Notes

|

| ------------------------------------ | ----------- | --------- | ------- | ---------- | ----- |

----------------------------------------------------------------------------------------------- |

| 5ire | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools.

|

| Apify MCP Tester | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools

|

| BeeAI Framework | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools in agentic workflows.

|

| Claude Code | ❌ | ✅ | ✅ | ❌ | ❌ | Supports prompts and tools

|

| Claude Desktop App | ✅ | ✅ | ✅ | ❌ | ❌ | Supports tools, prompts, and resources.

|

| Cline | ✅ | ❌ | ✅ | ❌ | ❌ | Supports tools and resources.

|

| Continue | ✅ | ✅ | ✅ | ❌ | ❌ | Supports tools, prompts, and resources.

|

| Copilot-MCP | ✅ | ❌ | ✅ | ❌ | ❌ | Supports tools and resources.

|

| Cursor | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools.

|

| Daydreams Agents | ✅ | ✅ | ✅ | ❌ | ❌ | Support for drop in Servers to Daydreams agents

|

| Emacs Mcp | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools in Emacs.

|

| fast-agent | ✅ | ✅ | ✅ | ✅ | ✅ | Full multimodal MCP support, with end-to-end tests

|

| Genkit |

|

| GenAIScript | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools.

|

| Goose | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools.

|

| LibreChat | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools for Agents

|

| mcp-agent | ❌ | ❌ | ✅ |

|

| OpenSumi | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools in OpenSumi

|

| oterm | ❌ | ✅ | ✅ | ❌ | ❌ | Supports tools and prompts.

|

| Roo Code | ✅ | ❌ | ✅ | ❌ | ❌ | Supports tools and resources.

|

| Sourcegraph Cody | ✅ | ❌ | ❌ | ❌ | ❌ | Supports resources through OpenCTX

|

| SpinAI | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools for Typescript AI Agents

|

| Superinterface | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools

|

| TheiaAI/TheiaIDE | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools for Agents in Theia AI and the AI-powered

Theia IDE |

| VS Code GitHub Copilot | ❌ | ❌ | ✅ | ❌ | ✅ | Supports dynamic tool/roots discovery, secure secret

configuration, and explicit tool prompting |

| Windsurf Editor | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools with AI Flow for collaborative

development. |

| Witsy | ❌ | ❌ | ✅ | ❌ | ❌ | Supports tools in Witsy.

|

| Zed | ❌ | ✅ | ❌ | ❌ | ❌ | Prompts appear as slash commands

|

5ire is an open source cross-platform desktop AI assistant that supports tools through MCP servers.

Key features:

- Built-in MCP servers can be quickly enabled and disabled.

- Users can add more servers by modifying the configuration file.

- It is open-source and user-friendly, suitable for beginners.

- Future support for MCP will be continuously improved.

Apify MCP Tester is an open-source client that connects to any MCP server using Server-Sent Events (SSE). It is a standalone Apify Actor designed for testing MCP servers over SSE, with support for Authorization headers. It uses plain JavaScript (old-school style) and is hosted on Apify, allowing you to run it without any setup.

Key features:

- Connects to any MCP server via SSE.

- Works with the Apify MCP Server to interact with one or more Apify Actors.

- Dynamically utilizes tools based on context and user queries (if supported by the server).

BeeAI Framework is an open-source framework for building, deploying, and serving powerful agentic workflows at scale. The framework includes the MCP Tool, a native feature that simplifies the integration of MCP servers into agentic workflows.

Key features:

- Seamlessly incorporate MCP tools into agentic workflows.

- Quickly instantiate framework-native tools from connected MCP client(s).

- Planned future support for agentic MCP capabilities.

Learn more:

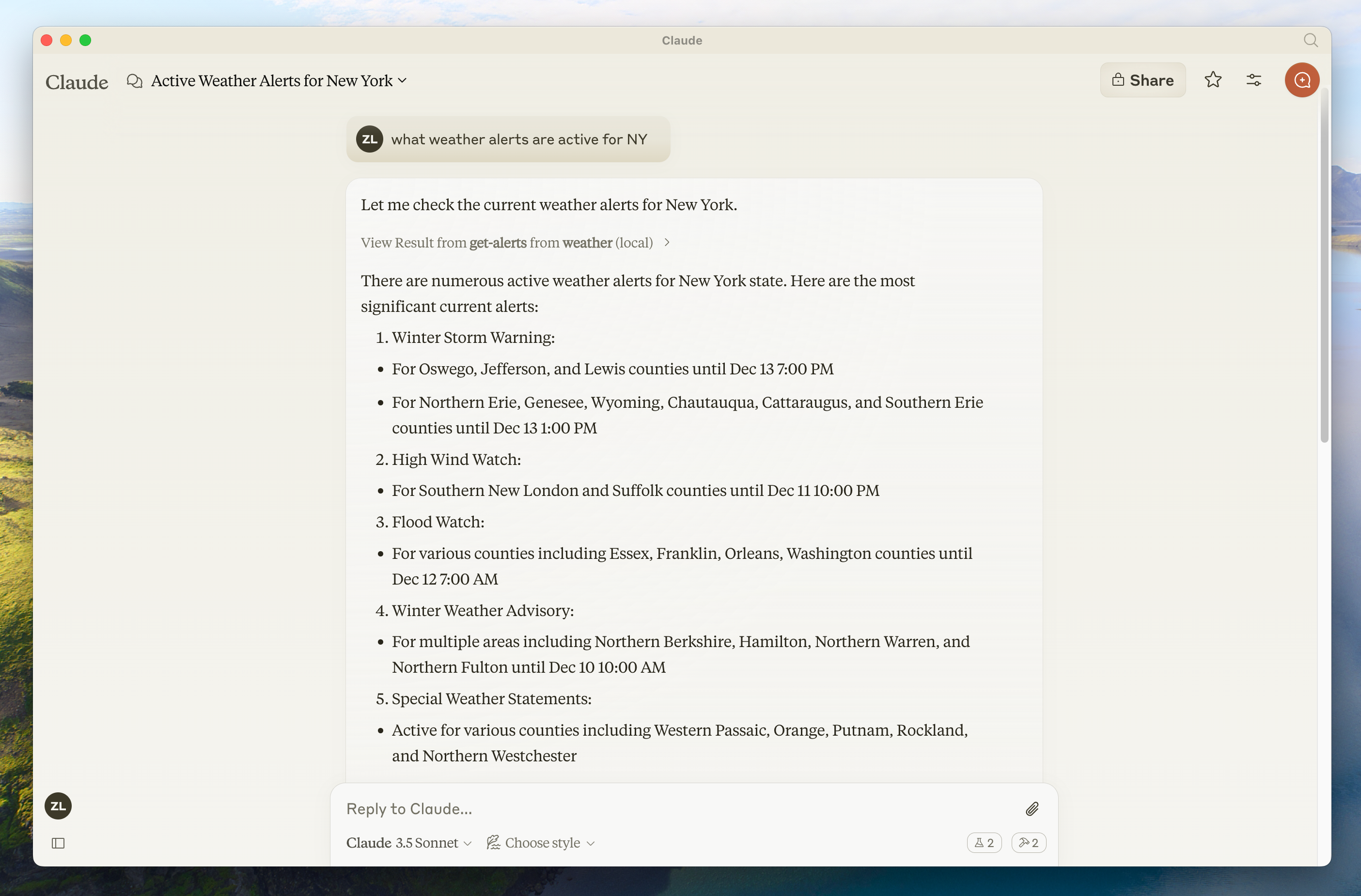

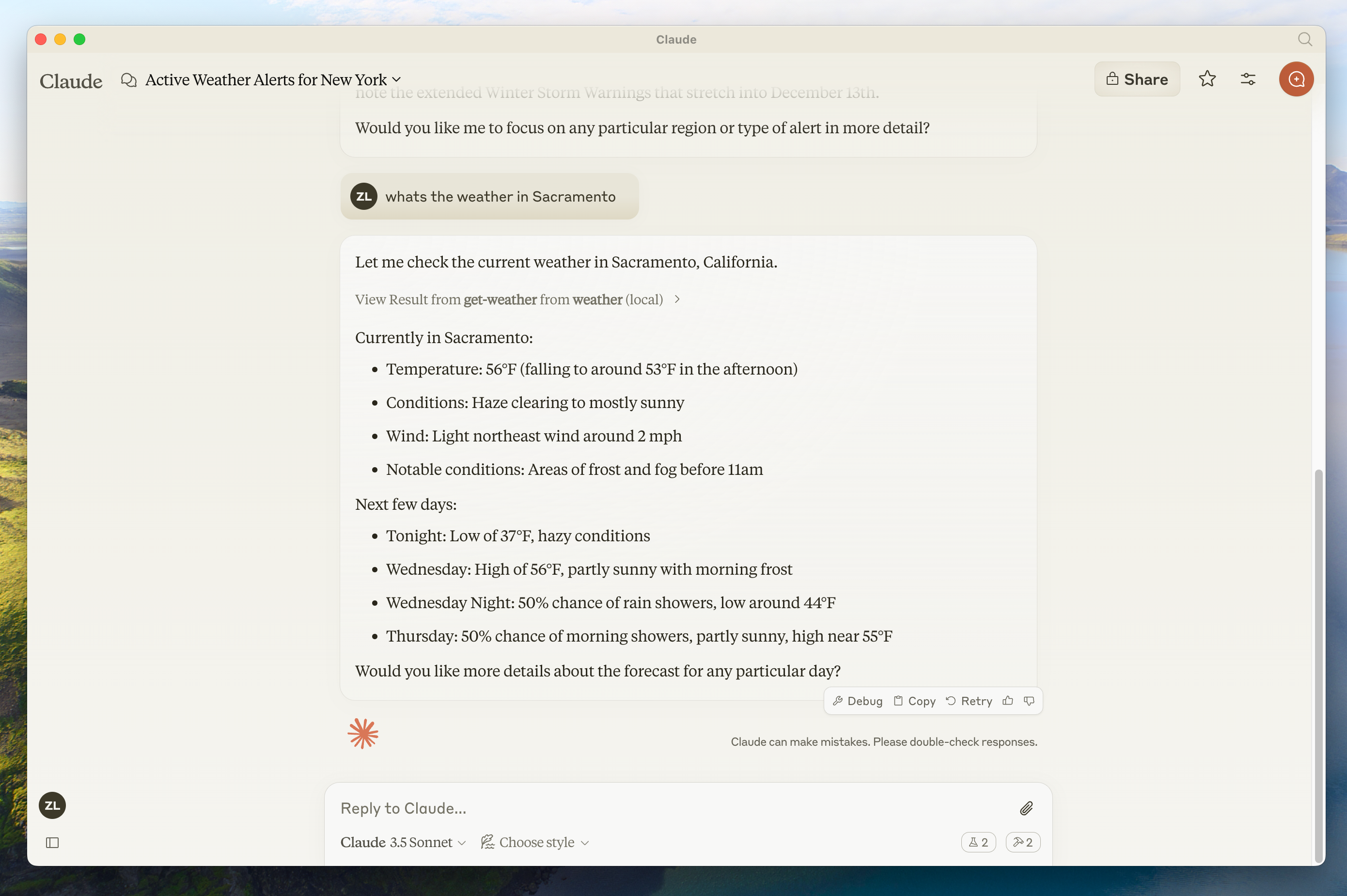

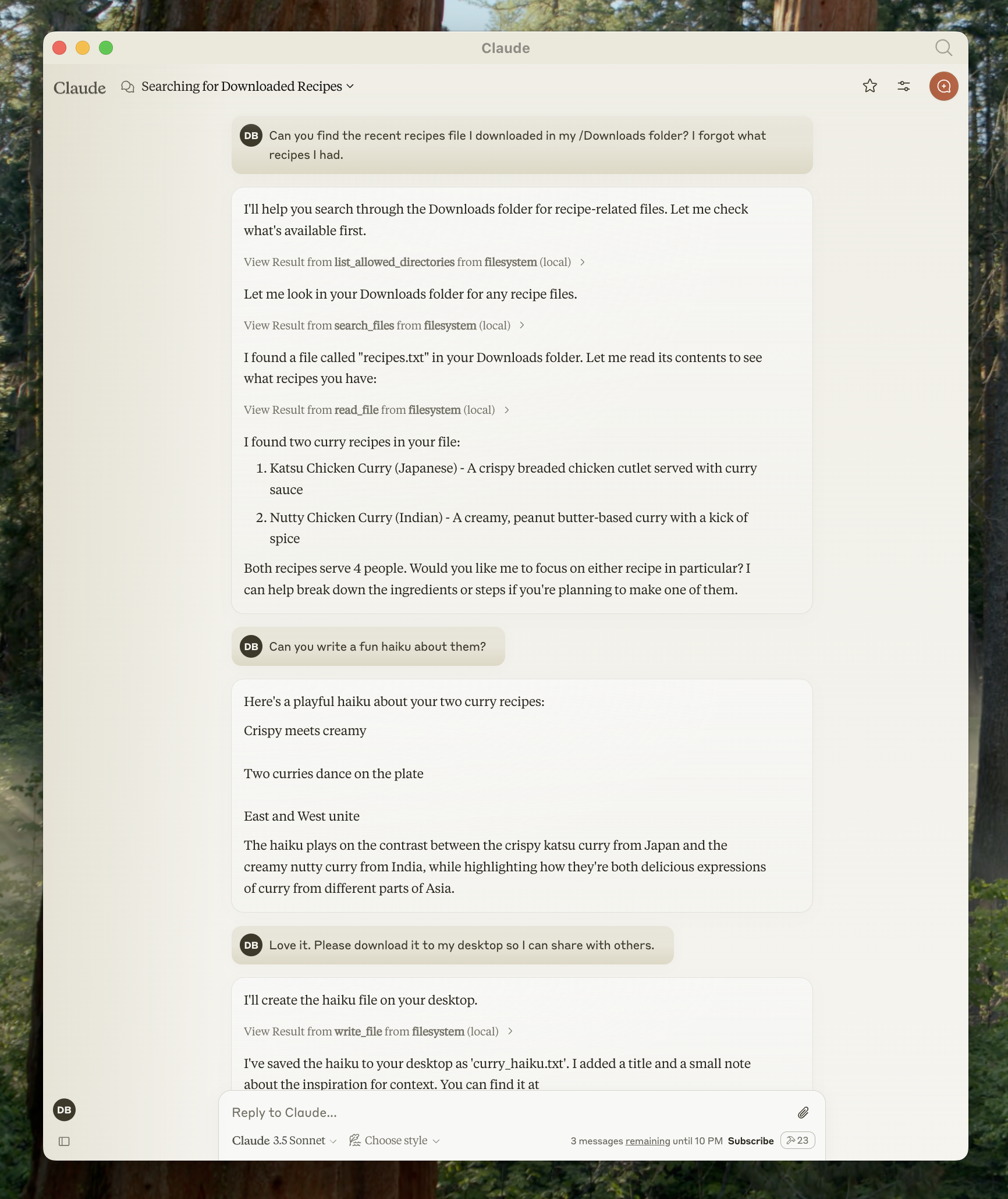

Claude Code is an interactive agentic coding tool from Anthropic that helps you code faster through natural language commands. It supports MCP integration for prompts and tools, and also functions as an MCP server to integrate with other clients.

Key features:

- Tool and prompt support for MCP servers

- Offers its own tools through an MCP server for integrating with other MCP clients

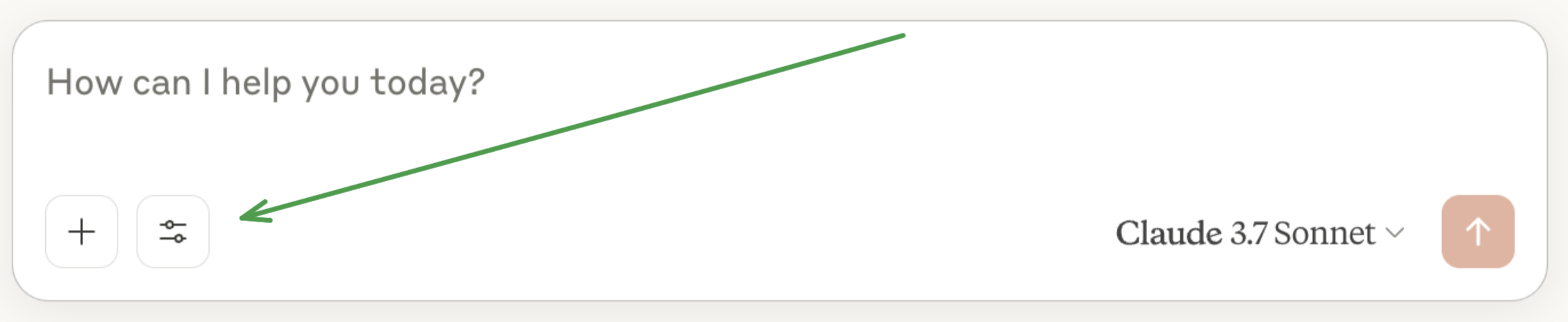

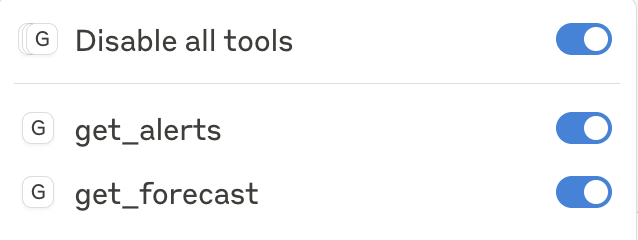

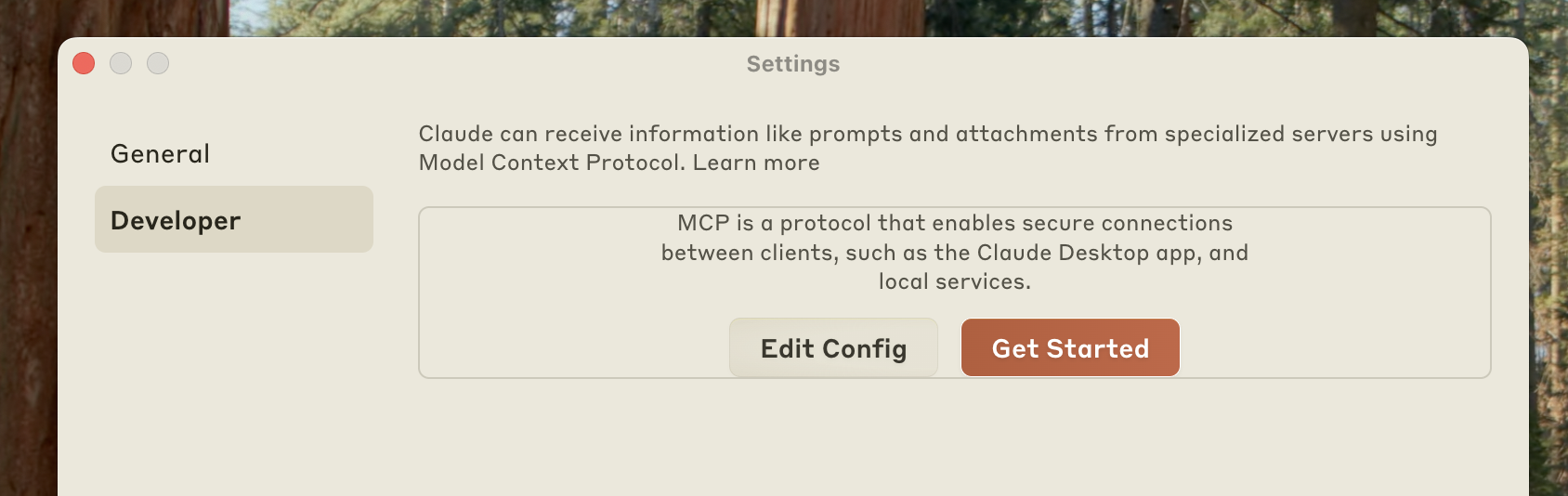

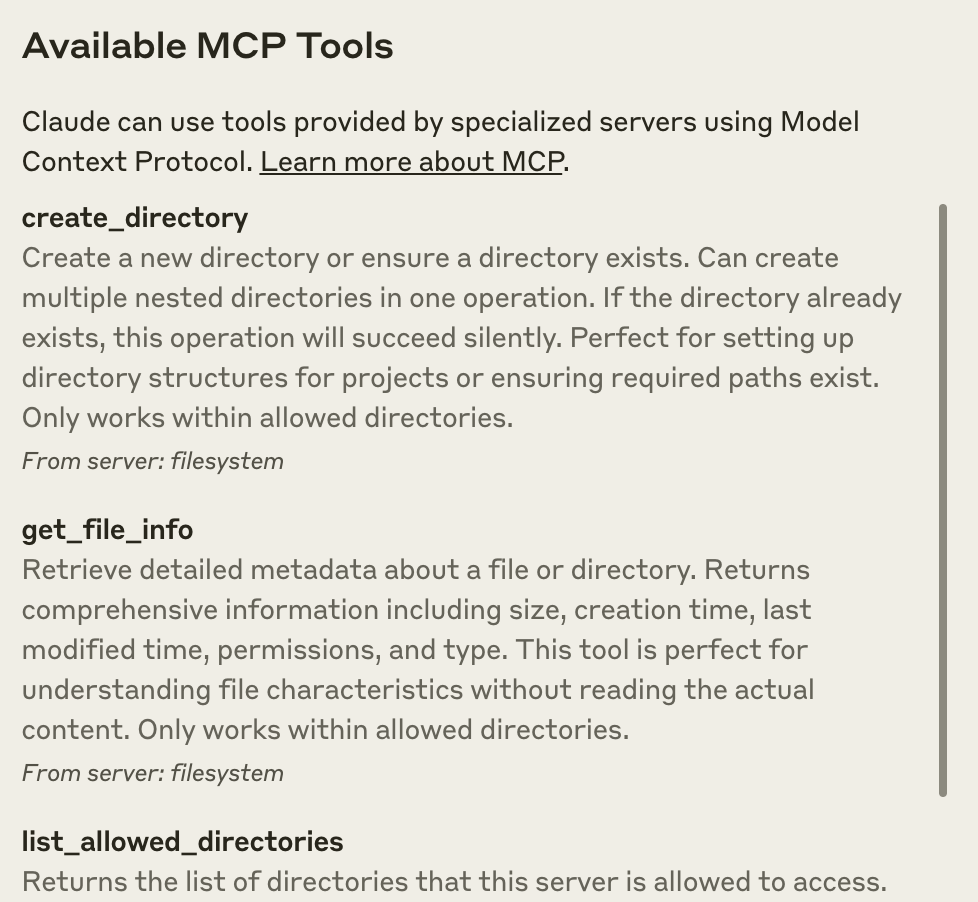

The Claude desktop application provides comprehensive support for MCP, enabling deep integration with local tools and data sources.

Key features:

- Full support for resources, allowing attachment of local files and data

- Support for prompt templates

- Tool integration for executing commands and scripts

- Local server connections for enhanced privacy and security

ⓘ Note: The Claude.ai web application does not currently support MCP. MCP features are only available in the desktop application.

Cline is an autonomous coding agent in VS Code that edits files, runs commands, uses a browser, and more–with your permission at each step.

Key features:

- Create and add tools through natural language (e.g. "add a tool that searches the web")

- Share custom MCP servers Cline creates with others via the

~/Documents/Cline/MCPdirectory - Displays configured MCP servers along with their tools, resources, and any error logs

Continue is an open-source AI code assistant, with built-in support for all MCP features.

Key features

- Type "@" to mention MCP resources

- Prompt templates surface as slash commands

- Use both built-in and MCP tools directly in chat

- Supports VS Code and JetBrains IDEs, with any LLM

Copilot-MCP enables AI coding assistance via MCP.

Key features:

- Support for MCP tools and resources

- Integration with development workflows

- Extensible AI capabilities

Cursor is an AI code editor.

Key Features:

- Support for MCP tools in Cursor Composer

- Support for both STDIO and SSE

Daydreams is a generative agent framework for executing anything onchain

Key features:

- Supports MCP Servers in config

- Exposes MCP Client

Emacs Mcp is an Emacs client designed to interface with MCP servers, enabling seamless connections and interactions. It provides MCP tool invocation support for AI plugins like gptel and llm, adhering to Emacs' standard tool invocation format. This integration enhances the functionality of AI tools within the Emacs ecosystem.

Key features:

- Provides MCP tool support for Emacs.

fast-agent is a Python Agent framework, with simple declarative support for creating Agents and Workflows, with full multi-modal support for Anthropic and OpenAI models.

Key features:

- PDF and Image support, based on MCP Native types

- Interactive front-end to develop and diagnose Agent applications, including passthrough and playback simulators

- Built in support for "Building Effective Agents" workflows.

- Deploy Agents as MCP Servers

Genkit is a cross-language SDK for building and integrating GenAI features into applications. The genkitx-mcp plugin enables consuming MCP servers as a client or creating MCP servers from Genkit tools and prompts.

Key features:

- Client support for tools and prompts (resources partially supported)

- Rich discovery with support in Genkit's Dev UI playground

- Seamless interoperability with Genkit's existing tools and prompts

- Works across a wide variety of GenAI models from top providers

Programmatically assemble prompts for LLMs using GenAIScript (in JavaScript). Orchestrate LLMs, tools, and data in JavaScript.

Key features:

- JavaScript toolbox to work with prompts

- Abstraction to make it easy and productive

- Seamless Visual Studio Code integration

Goose is an open source AI agent that supercharges your software development by automating coding tasks.

Key features:

- Expose MCP functionality to Goose through tools.

- MCPs can be installed directly via the extensions directory, CLI, or UI.

- Goose allows you to extend its functionality by building your own MCP servers.

- Includes built-in tools for development, web scraping, automation, memory, and integrations with JetBrains and Google Drive.

LibreChat is an open-source, customizable AI chat UI that supports multiple AI providers, now including MCP integration.

Key features:

- Extend current tool ecosystem, including Code Interpreter and Image generation tools, through MCP servers

- Add tools to customizable Agents, using a variety of LLMs from top providers

- Open-source and self-hostable, with secure multi-user support

- Future roadmap includes expanded MCP feature support

mcp-agent is a simple, composable framework to build agents using Model Context Protocol.

Key features:

- Automatic connection management of MCP servers.

- Expose tools from multiple servers to an LLM.

- Implements every pattern defined in Building Effective Agents.

- Supports workflow pause/resume signals, such as waiting for human feedback.

Microsoft Copilot Studio is a robust SaaS platform designed for building custom AI-driven applications and intelligent agents, empowering developers to create, deploy, and manage sophisticated AI solutions.

Key features:

- Support for MCP tools

- Extend Copilot Studio agents with MCP servers

- Leveraging Microsoft unified, governed, and secure API management solutions

OpenSumi is a framework helps you quickly build AI Native IDE products.

Key features:

- Supports MCP tools in OpenSumi

- Supports built-in IDE MCP servers and custom MCP servers

oterm is a terminal client for Ollama allowing users to create chats/agents.

Key features:

- Support for multiple fully customizable chat sessions with Ollama connected with tools.

- Support for MCP tools.

Roo Code enables AI coding assistance via MCP.

Key features:

- Support for MCP tools and resources

- Integration with development workflows

- Extensible AI capabilities

Cody is Sourcegraph's AI coding assistant, which implements MCP through OpenCTX.

Key features:

- Support for MCP resources

- Integration with Sourcegraph's code intelligence

- Uses OpenCTX as an abstraction layer

- Future support planned for additional MCP features

SpinAI is an open-source TypeScript framework for building observable AI agents. The framework provides native MCP compatibility, allowing agents to seamlessly integrate with MCP servers and tools.

Key features:

- Built-in MCP compatibility for AI agents

- Open-source TypeScript framework

- Observable agent architecture

- Native support for MCP tools integration

Superinterface is AI infrastructure and a developer platform to build in-app AI assistants with support for MCP, interactive components, client-side function calling and more.

Key features:

- Use tools from MCP servers in assistants embedded via React components or script tags

- SSE transport support

- Use any AI model from any AI provider (OpenAI, Anthropic, Ollama, others)

Theia AI is a framework for building AI-enhanced tools and IDEs. The AI-powered Theia IDE is an open and flexible development environment built on Theia AI.

Key features:

- Tool Integration: Theia AI enables AI agents, including those in the Theia IDE, to utilize MCP servers for seamless tool interaction.

- Customizable Prompts: The Theia IDE allows users to define and adapt prompts, dynamically integrating MCP servers for tailored workflows.

- Custom agents: The Theia IDE supports creating custom agents that leverage MCP capabilities, enabling users to design dedicated workflows on the fly.

Theia AI and Theia IDE's MCP integration provide users with flexibility, making them powerful platforms for exploring and adapting MCP.

Learn more:

VS Code integrates MCP with GitHub Copilot through agent mode, allowing direct interaction with MCP-provided tools within your agentic coding workflow. Configure servers in Claude Desktop, workspace or user settings, with guided MCP installation and secure handling of keys in input variables to avoid leaking hard-coded keys.

Key features:

- Support for stdio and server-sent events (SSE) transport

- Per-session selection of tools per agent session for optimal performance

- Easy server debugging with restart commands and output logging

- Tool calls with editable inputs and always-allow toggle

- Integration with existing VS Code extension system to register MCP servers from extensions

Windsurf Editor is an agentic IDE that combines AI assistance with developer workflows. It features an innovative AI Flow system that enables both collaborative and independent AI interactions while maintaining developer control.

Key features:

- Revolutionary AI Flow paradigm for human-AI collaboration

- Intelligent code generation and understanding

- Rich development tools with multi-model support

Witsy is an AI desktop assistant, supoorting Anthropic models and MCP servers as LLM tools.

Key features:

- Multiple MCP servers support

- Tool integration for executing commands and scripts

- Local server connections for enhanced privacy and security

- Easy-install from Smithery.ai

- Open-source, available for macOS, Windows and Linux

Zed is a high-performance code editor with built-in MCP support, focusing on prompt templates and tool integration.

Key features:

- Prompt templates surface as slash commands in the editor

- Tool integration for enhanced coding workflows

- Tight integration with editor features and workspace context

- Does not support MCP resources

If you've added MCP support to your application, we encourage you to submit a pull request to add it to this list. MCP integration can provide your users with powerful contextual AI capabilities and make your application part of the growing MCP ecosystem.

Benefits of adding MCP support:

- Enable users to bring their own context and tools

- Join a growing ecosystem of interoperable AI applications

- Provide users with flexible integration options

- Support local-first AI workflows

To get started with implementing MCP in your application, check out our Python or TypeScript SDK Documentation

This list is maintained by the community. If you notice any inaccuracies or would like to update information about MCP support in your application, please submit a pull request or open an issue in our documentation repository.

Source: https://modelcontextprotocol.io/development/contributing

How to participate in Model Context Protocol development

We welcome contributions from the community! Please review our contributing guidelines for details on how to submit changes.

All contributors must adhere to our Code of Conduct.

For questions and discussions, please use GitHub Discussions.

Source: https://modelcontextprotocol.io/development/roadmap

Our plans for evolving Model Context Protocol

Last updated: 2025-03-27

The Model Context Protocol is rapidly evolving. This page outlines our current thinking on key priorities and direction for approximately the next six months, though these may change significantly as the project develops. To see what's changed recently, check out the specification changelog.

The ideas presented here are not commitments—we may solve these challenges differently than described, or some may not materialize at all. This is also not an exhaustive list; we may incorporate work that isn't mentioned here.

We value community participation! Each section links to relevant discussions where you can learn more and contribute your thoughts.

For a technical view of our standardization process, visit the Standards Track on GitHub, which tracks how proposals progress toward inclusion in the official MCP specification.

To foster a robust developer ecosystem, we plan to invest in:

- Reference Client Implementations: demonstrating protocol features with high-quality AI applications

- Compliance Test Suites: automated verification that clients, servers, and SDKs properly implement the specification

These tools will help developers confidently implement MCP while ensuring consistent behavior across the ecosystem.

For MCP to reach its full potential, we need streamlined ways to distribute and discover MCP servers.

We plan to develop an MCP Registry that will enable centralized server discovery and metadata. This registry will primarily function as an API layer that third-party marketplaces and discovery services can build upon.

As MCP increasingly becomes part of agentic workflows, we're exploring improvements such as:

- Agent Graphs: enabling complex agent topologies through namespacing and graph-aware communication patterns

- Interactive Workflows: improving human-in-the-loop experiences with granular permissioning, standardized interaction patterns, and ways to directly communicate with the end user

Supporting the full spectrum of AI capabilities in MCP, including:

- Additional Modalities: video and other media types

- Streaming: multipart, chunked messages, and bidirectional communication for interactive experiences

We're implementing governance structures that prioritize:

- Community-Led Development: fostering a collaborative ecosystem where community members and AI developers can all participate in MCP's evolution, ensuring it serves diverse applications and use cases

- Transparent Standardization: establishing clear processes for contributing to the specification, while exploring formal standardization via industry bodies

We welcome your contributions to MCP's future! Join our GitHub Discussions to share ideas, provide feedback, or participate in the development process.

Source: https://modelcontextprotocol.io/development/updates

The latest updates and improvements to MCP

* Version [0.9.0](https://github.com/modelcontextprotocol/java-sdk/releases/tag/v0.9.0) of the MCP Java SDK has been released. * Refactored logging system to use exchange mechanism * Custom Context Paths * Server Instructions * CallToolResult Enhancement * Fix issues and cleanup API * Added binary compatibility tracking to avoid breaking changes * Drop jdk requirements to JDK8 * Added Claude Desktop integration with sample * The full changelog can be found here: [https://github.com/modelcontextprotocol/kotlin-sdk/releases/tag/0.4.0](https://github.com/modelcontextprotocol/kotlin-sdk/releases/tag/0.4.0) * Version [0.8.1](https://github.com/modelcontextprotocol/java-sdk/releases/tag/v0.8.1) of the MCP Java SDK has been released, providing important bug fixes. * We are exited to announce the availability of the MCP [C# SDK](https://github.com/modelcontextprotocol/csharp-sdk/) developed by [Peder Holdgaard Pedersen](http://github.com/PederHP) and Microsoft. This joins our growing list of supported languages. The C# SDK is also available as [NuGet package](https://www.nuget.org/packages/ModelContextProtocol) * Python SDK 1.5.0 was released with multiple fixes and improvements. * Version [0.8.0](https://github.com/modelcontextprotocol/java-sdk/releases/tag/v0.8.0) of the MCP Java SDK has been released, delivering important session management improvements and bug fixes. * Typescript SDK 1.7.0 was released with multiple fixes and improvements. * We're excited to announce that the Java SDK developed by Spring AI at VMware Tanzu is now the official [Java SDK](https://github.com/modelcontextprotocol/java-sdk) for MCP. This joins our existing Kotlin SDK in our growing list of supported languages. The Spring AI team will maintain the SDK as an integral part of the Model Context Protocol organization. We're thrilled to welcome them to the MCP community! * Version [1.2.1](https://github.com/modelcontextprotocol/python-sdk/releases/tag/v1.2.1) of the MCP Python SDK has been released, delivering important stability improvements and bug fixes. * Simplified, express-like API in the [TypeScript SDK](https://github.com/modelcontextprotocol/typescript-sdk) * Added 8 new clients to the [clients page](https://modelcontextprotocol.io/clients) * FastMCP API in the [Python SDK](https://github.com/modelcontextprotocol/python-sdk) * Dockerized MCP servers in the [servers repo](https://github.com/modelcontextprotocol/servers) * Jetbrains released a Kotlin SDK for MCP! * For a sample MCP Kotlin server, check out [this repository](https://github.com/modelcontextprotocol/kotlin-sdk/tree/main/samples/kotlin-mcp-server)Source: https://modelcontextprotocol.io/docs/concepts/architecture

Understand how MCP connects clients, servers, and LLMs

The Model Context Protocol (MCP) is built on a flexible, extensible architecture that enables seamless communication between LLM applications and integrations. This document covers the core architectural components and concepts.

MCP follows a client-server architecture where:

- Hosts are LLM applications (like Claude Desktop or IDEs) that initiate connections

- Clients maintain 1:1 connections with servers, inside the host application

- Servers provide context, tools, and prompts to clients

flowchart LR

subgraph "Host"

client1[MCP Client]

client2[MCP Client]

end

subgraph "Server Process"

server1[MCP Server]

end

subgraph "Server Process"

server2[MCP Server]

end

client1 <-->|Transport Layer| server1

client2 <-->|Transport Layer| server2

The protocol layer handles message framing, request/response linking, and high-level communication patterns.

```typescript class Protocol { // Handle incoming requests setRequestHandler(schema: T, handler: (request: T, extra: RequestHandlerExtra) => Promise): void // Handle incoming notifications

setNotificationHandler<T>(schema: T, handler: (notification: T) => Promise<void>): void

// Send requests and await responses

request<T>(request: Request, schema: T, options?: RequestOptions): Promise<T>

// Send one-way notifications

notification(notification: Notification): Promise<void>

}

```

</Tab>

<Tab title="Python">

```python

class Session(BaseSession[RequestT, NotificationT, ResultT]):

async def send_request(

self,

request: RequestT,

result_type: type[Result]

) -> Result:

"""Send request and wait for response. Raises McpError if response contains error."""

# Request handling implementation

async def send_notification(

self,

notification: NotificationT

) -> None:

"""Send one-way notification that doesn't expect response."""

# Notification handling implementation

async def _received_request(

self,

responder: RequestResponder[ReceiveRequestT, ResultT]

) -> None:

"""Handle incoming request from other side."""

# Request handling implementation

async def _received_notification(

self,

notification: ReceiveNotificationT

) -> None:

"""Handle incoming notification from other side."""

# Notification handling implementation

```

</Tab>

Key classes include:

ProtocolClientServer

The transport layer handles the actual communication between clients and servers. MCP supports multiple transport mechanisms:

-

Stdio transport

- Uses standard input/output for communication

- Ideal for local processes

-

HTTP with SSE transport

- Uses Server-Sent Events for server-to-client messages

- HTTP POST for client-to-server messages

All transports use JSON-RPC 2.0 to exchange messages. See the specification for detailed information about the Model Context Protocol message format.

MCP has these main types of messages:

-

Requests expect a response from the other side:

interface Request { method: string; params?: { ... }; }

-

Results are successful responses to requests:

interface Result { [key: string]: unknown; }

-

Errors indicate that a request failed:

interface Error { code: number; message: string; data?: unknown; }

-

Notifications are one-way messages that don't expect a response:

interface Notification { method: string; params?: { ... }; }

sequenceDiagram

participant Client

participant Server

Client->>Server: initialize request

Server->>Client: initialize response

Client->>Server: initialized notification

Note over Client,Server: Connection ready for use

- Client sends

initializerequest with protocol version and capabilities - Server responds with its protocol version and capabilities

- Client sends

initializednotification as acknowledgment - Normal message exchange begins

After initialization, the following patterns are supported:

- Request-Response: Client or server sends requests, the other responds

- Notifications: Either party sends one-way messages

Either party can terminate the connection:

- Clean shutdown via

close() - Transport disconnection

- Error conditions

MCP defines these standard error codes:

enum ErrorCode {

// Standard JSON-RPC error codes

ParseError = -32700,

InvalidRequest = -32600,

MethodNotFound = -32601,

InvalidParams = -32602,

InternalError = -32603

}SDKs and applications can define their own error codes above -32000.

Errors are propagated through:

- Error responses to requests

- Error events on transports

- Protocol-level error handlers

Here's a basic example of implementing an MCP server:

```typescript import { Server } from "@modelcontextprotocol/sdk/server/index.js"; import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js"; const server = new Server({

name: "example-server",

version: "1.0.0"

}, {

capabilities: {

resources: {}

}

});

// Handle requests

server.setRequestHandler(ListResourcesRequestSchema, async () => {

return {

resources: [

{

uri: "example://resource",

name: "Example Resource"

}

]

};

});

// Connect transport

const transport = new StdioServerTransport();

await server.connect(transport);

```

</Tab>

<Tab title="Python">

```python

import asyncio

import mcp.types as types

from mcp.server import Server

from mcp.server.stdio import stdio_server

app = Server("example-server")

@app.list_resources()

async def list_resources() -> list[types.Resource]:

return [

types.Resource(

uri="example://resource",

name="Example Resource"

)

]

async def main():

async with stdio_server() as streams:

await app.run(

streams[0],

streams[1],

app.create_initialization_options()

)

if __name__ == "__main__":

asyncio.run(main())

```

</Tab>

-

Local communication

- Use stdio transport for local processes

- Efficient for same-machine communication

- Simple process management

-

Remote communication

- Use SSE for scenarios requiring HTTP compatibility

- Consider security implications including authentication and authorization

-

Request processing

- Validate inputs thoroughly

- Use type-safe schemas

- Handle errors gracefully

- Implement timeouts

-

Progress reporting

- Use progress tokens for long operations

- Report progress incrementally

- Include total progress when known

-

Error management

- Use appropriate error codes

- Include helpful error messages

- Clean up resources on errors

-

Transport security

- Use TLS for remote connections

- Validate connection origins

- Implement authentication when needed

-

Message validation

- Validate all incoming messages

- Sanitize inputs

- Check message size limits

- Verify JSON-RPC format

-

Resource protection

- Implement access controls

- Validate resource paths

- Monitor resource usage

- Rate limit requests

-

Error handling

- Don't leak sensitive information

- Log security-relevant errors

- Implement proper cleanup

- Handle DoS scenarios

-

Logging

- Log protocol events

- Track message flow

- Monitor performance

- Record errors

-

Diagnostics

- Implement health checks

- Monitor connection state

- Track resource usage

- Profile performance

-

Testing

- Test different transports

- Verify error handling

- Check edge cases

- Load test servers

Source: https://modelcontextprotocol.io/docs/concepts/prompts

Create reusable prompt templates and workflows

Prompts enable servers to define reusable prompt templates and workflows that clients can easily surface to users and LLMs. They provide a powerful way to standardize and share common LLM interactions.

Prompts are designed to be **user-controlled**, meaning they are exposed from servers to clients with the intention of the user being able to explicitly select them for use.Prompts in MCP are predefined templates that can:

- Accept dynamic arguments

- Include context from resources

- Chain multiple interactions

- Guide specific workflows

- Surface as UI elements (like slash commands)

Each prompt is defined with:

{

name: string; // Unique identifier for the prompt

description?: string; // Human-readable description

arguments?: [ // Optional list of arguments

{

name: string; // Argument identifier

description?: string; // Argument description

required?: boolean; // Whether argument is required

}

]

}Clients can discover available prompts through the prompts/list endpoint:

// Request

{

method: "prompts/list"

}

// Response

{

prompts: [

{

name: "analyze-code",

description: "Analyze code for potential improvements",

arguments: [

{

name: "language",

description: "Programming language",

required: true

}

]

}

]

}To use a prompt, clients make a prompts/get request:

// Request

{

method: "prompts/get",

params: {

name: "analyze-code",

arguments: {

language: "python"

}

}

}

// Response

{

description: "Analyze Python code for potential improvements",

messages: [

{

role: "user",

content: {

type: "text",

text: "Please analyze the following Python code for potential improvements:\n\n```python\ndef calculate_sum(numbers):\n total = 0\n for num in

numbers:\n total = total + num\n return total\n\nresult = calculate_sum([1, 2, 3, 4, 5])\nprint(result)\n```"

}

}

]

}Prompts can be dynamic and include:

{

"name": "analyze-project",

"description": "Analyze project logs and code",

"arguments": [

{

"name": "timeframe",

"description": "Time period to analyze logs",

"required": true

},

{

"name": "fileUri",

"description": "URI of code file to review",

"required": true

}

]

}When handling the prompts/get request:

{

"messages": [

{

"role": "user",

"content": {

"type": "text",

"text": "Analyze these system logs and the code file for any issues:"

}

},

{

"role": "user",

"content": {

"type": "resource",

"resource": {

"uri": "logs://recent?timeframe=1h",

"text": "[2024-03-14 15:32:11] ERROR: Connection timeout in network.py:127\n[2024-03-14 15:32:15] WARN: Retrying connection (attempt 2/3)\n[2024-03-14

15:32:20] ERROR: Max retries exceeded",

"mimeType": "text/plain"

}

}

},

{

"role": "user",

"content": {

"type": "resource",

"resource": {

"uri": "file:///path/to/code.py",

"text": "def connect_to_service(timeout=30):\n retries = 3\n for attempt in range(retries):\n try:\n return

establish_connection(timeout)\n except TimeoutError:\n if attempt == retries - 1:\n raise\n time.sleep(5)\n\ndef

establish_connection(timeout):\n # Connection implementation\n pass",

"mimeType": "text/x-python"

}

}

}

]

}const debugWorkflow = {

name: "debug-error",

async getMessages(error: string) {

return [

{

role: "user",

content: {

type: "text",

text: `Here's an error I'm seeing: ${error}`

}

},

{

role: "assistant",

content: {

type: "text",

text: "I'll help analyze this error. What have you tried so far?"

}

},

{

role: "user",

content: {

type: "text",

text: "I've tried restarting the service, but the error persists."

}

}

];

}

};Here's a complete example of implementing prompts in an MCP server:

```typescript import { Server } from "@modelcontextprotocol/sdk/server"; import { ListPromptsRequestSchema, GetPromptRequestSchema } from "@modelcontextprotocol/sdk/types"; const PROMPTS = {

"git-commit": {

name: "git-commit",

description: "Generate a Git commit message",

arguments: [

{

name: "changes",

description: "Git diff or description of changes",

required: true

}

]

},

"explain-code": {

name: "explain-code",

description: "Explain how code works",

arguments: [

{

name: "code",

description: "Code to explain",

required: true

},

{

name: "language",

description: "Programming language",

required: false

}

]

}

};

const server = new Server({

name: "example-prompts-server",

version: "1.0.0"

}, {

capabilities: {

prompts: {}

}

});

// List available prompts

server.setRequestHandler(ListPromptsRequestSchema, async () => {

return {

prompts: Object.values(PROMPTS)

};

});

// Get specific prompt

server.setRequestHandler(GetPromptRequestSchema, async (request) => {

const prompt = PROMPTS[request.params.name];

if (!prompt) {

throw new Error(`Prompt not found: ${request.params.name}`);

}

if (request.params.name === "git-commit") {

return {

messages: [

{

role: "user",

content: {

type: "text",

text: `Generate a concise but descriptive commit message for these changes:\n\n${request.params.arguments?.changes}`

}

}

]

};

}

if (request.params.name === "explain-code") {

const language = request.params.arguments?.language || "Unknown";

return {

messages: [

{

role: "user",

content: {

type: "text",

text: `Explain how this ${language} code works:\n\n${request.params.arguments?.code}`

}

}

]

};

}

throw new Error("Prompt implementation not found");

});

```

</Tab>

<Tab title="Python">

```python

from mcp.server import Server

import mcp.types as types

# Define available prompts

PROMPTS = {

"git-commit": types.Prompt(

name="git-commit",

description="Generate a Git commit message",

arguments=[

types.PromptArgument(

name="changes",

description="Git diff or description of changes",

required=True

)

],

),

"explain-code": types.Prompt(

name="explain-code",

description="Explain how code works",

arguments=[

types.PromptArgument(

name="code",

description="Code to explain",

required=True

),

types.PromptArgument(

name="language",

description="Programming language",

required=False

)

],

)

}

# Initialize server

app = Server("example-prompts-server")

@app.list_prompts()

async def list_prompts() -> list[types.Prompt]:

return list(PROMPTS.values())

@app.get_prompt()

async def get_prompt(

name: str, arguments: dict[str, str] | None = None

) -> types.GetPromptResult:

if name not in PROMPTS:

raise ValueError(f"Prompt not found: {name}")

if name == "git-commit":

changes = arguments.get("changes") if arguments else ""

return types.GetPromptResult(

messages=[

types.PromptMessage(

role="user",

content=types.TextContent(

type="text",

text=f"Generate a concise but descriptive commit message "

f"for these changes:\n\n{changes}"

)

)

]

)

if name == "explain-code":

code = arguments.get("code") if arguments else ""

language = arguments.get("language", "Unknown") if arguments else "Unknown"

return types.GetPromptResult(

messages=[

types.PromptMessage(

role="user",

content=types.TextContent(

type="text",

text=f"Explain how this {language} code works:\n\n{code}"

)

)

]

)

raise ValueError("Prompt implementation not found")

```

</Tab>

When implementing prompts:

- Use clear, descriptive prompt names

- Provide detailed descriptions for prompts and arguments

- Validate all required arguments

- Handle missing arguments gracefully

- Consider versioning for prompt templates

- Cache dynamic content when appropriate

- Implement error handling

- Document expected argument formats

- Consider prompt composability

- Test prompts with various inputs

Prompts can be surfaced in client UIs as:

- Slash commands

- Quick actions

- Context menu items

- Command palette entries

- Guided workflows

- Interactive forms

Servers can notify clients about prompt changes:

- Server capability:

prompts.listChanged - Notification:

notifications/prompts/list_changed - Client re-fetches prompt list

When implementing prompts:

- Validate all arguments

- Sanitize user input

- Consider rate limiting

- Implement access controls

- Audit prompt usage

- Handle sensitive data appropriately

- Validate generated content

- Implement timeouts

- Consider prompt injection risks

- Document security requirements

Source: https://modelcontextprotocol.io/docs/concepts/resources

Expose data and content from your servers to LLMs

Resources are a core primitive in the Model Context Protocol (MCP) that allow servers to expose data and content that can be read by clients and used as context for LLM interactions.

Resources are designed to be **application-controlled**, meaning that the client application can decide how and when they should be used. Different MCP clients may handle resources differently. For example:* Claude Desktop currently requires users to explicitly select resources before they can be used

* Other clients might automatically select resources based on heuristics

* Some implementations may even allow the AI model itself to determine which resources to use

Server authors should be prepared to handle any of these interaction patterns when implementing resource support. In order to expose data to models

automatically, server authors should use a model-controlled primitive such as Tools.

Resources represent any kind of data that an MCP server wants to make available to clients. This can include:

- File contents

- Database records

- API responses

- Live system data

- Screenshots and images

- Log files

- And more

Each resource is identified by a unique URI and can contain either text or binary data.

Resources are identified using URIs that follow this format:

[protocol]://[host]/[path]

For example:

file:///home/user/documents/report.pdfpostgres://database/customers/schemascreen://localhost/display1

The protocol and path structure is defined by the MCP server implementation. Servers can define their own custom URI schemes.

Resources can contain two types of content:

Text resources contain UTF-8 encoded text data. These are suitable for:

- Source code

- Configuration files

- Log files

- JSON/XML data

- Plain text

Binary resources contain raw binary data encoded in base64. These are suitable for:

- Images

- PDFs

- Audio files

- Video files

- Other non-text formats

Clients can discover available resources through two main methods:

Servers expose a list of concrete resources via the resources/list endpoint. Each resource includes:

{

uri: string; // Unique identifier for the resource

name: string; // Human-readable name

description?: string; // Optional description

mimeType?: string; // Optional MIME type

}For dynamic resources, servers can expose URI templates that clients can use to construct valid resource URIs:

{

uriTemplate: string; // URI template following RFC 6570

name: string; // Human-readable name for this type

description?: string; // Optional description

mimeType?: string; // Optional MIME type for all matching resources

}To read a resource, clients make a resources/read request with the resource URI.

The server responds with a list of resource contents:

{

contents: [

{

uri: string; // The URI of the resource

mimeType?: string; // Optional MIME type

// One of:

text?: string; // For text resources

blob?: string; // For binary resources (base64 encoded)

}

]

}MCP supports real-time updates for resources through two mechanisms:

Servers can notify clients when their list of available resources changes via the notifications/resources/list_changed notification.

Clients can subscribe to updates for specific resources:

- Client sends

resources/subscribewith resource URI - Server sends

notifications/resources/updatedwhen the resource changes - Client can fetch latest content with

resources/read - Client can unsubscribe with

resources/unsubscribe

Here's a simple example of implementing resource support in an MCP server:

```typescript const server = new Server({ name: "example-server", version: "1.0.0" }, { capabilities: { resources: {} } }); // List available resources

server.setRequestHandler(ListResourcesRequestSchema, async () => {

return {

resources: [

{

uri: "file:///logs/app.log",

name: "Application Logs",

mimeType: "text/plain"

}

]

};

});

// Read resource contents

server.setRequestHandler(ReadResourceRequestSchema, async (request) => {

const uri = request.params.uri;

if (uri === "file:///logs/app.log") {

const logContents = await readLogFile();

return {

contents: [

{

uri,

mimeType: "text/plain",

text: logContents

}

]

};

}

throw new Error("Resource not found");

});

```

</Tab>

<Tab title="Python">

```python

app = Server("example-server")

@app.list_resources()

async def list_resources() -> list[types.Resource]:

return [

types.Resource(

uri="file:///logs/app.log",

name="Application Logs",

mimeType="text/plain"

)

]

@app.read_resource()

async def read_resource(uri: AnyUrl) -> str:

if str(uri) == "file:///logs/app.log":

log_contents = await read_log_file()

return log_contents

raise ValueError("Resource not found")

# Start server

async with stdio_server() as streams:

await app.run(

streams[0],

streams[1],

app.create_initialization_options()

)

```

</Tab>

When implementing resource support:

- Use clear, descriptive resource names and URIs

- Include helpful descriptions to guide LLM understanding

- Set appropriate MIME types when known

- Implement resource templates for dynamic content

- Use subscriptions for frequently changing resources

- Handle errors gracefully with clear error messages

- Consider pagination for large resource lists

- Cache resource contents when appropriate

- Validate URIs before processing

- Document your custom URI schemes

When exposing resources:

- Validate all resource URIs

- Implement appropriate access controls

- Sanitize file paths to prevent directory traversal

- Be cautious with binary data handling

- Consider rate limiting for resource reads

- Audit resource access

- Encrypt sensitive data in transit

- Validate MIME types

- Implement timeouts for long-running reads

- Handle resource cleanup appropriately

Source: https://modelcontextprotocol.io/docs/concepts/roots

Understanding roots in MCP

Roots are a concept in MCP that define the boundaries where servers can operate. They provide a way for clients to inform servers about relevant resources and their locations.

A root is a URI that a client suggests a server should focus on. When a client connects to a server, it declares which roots the server should work with. While primarily used for filesystem paths, roots can be any valid URI including HTTP URLs.

For example, roots could be:

file:///home/user/projects/myapp

https://api.example.com/v1

Roots serve several important purposes:

- Guidance: They inform servers about relevant resources and locations

- Clarity: Roots make it clear which resources are part of your workspace

- Organization: Multiple roots let you work with different resources simultaneously

When a client supports roots, it:

- Declares the

rootscapability during connection - Provides a list of suggested roots to the server

- Notifies the server when roots change (if supported)

While roots are informational and not strictly enforcing, servers should:

- Respect the provided roots

- Use root URIs to locate and access resources

- Prioritize operations within root boundaries

Roots are commonly used to define:

- Project directories

- Repository locations

- API endpoints

- Configuration locations

- Resource boundaries

When working with roots:

- Only suggest necessary resources

- Use clear, descriptive names for roots

- Monitor root accessibility

- Handle root changes gracefully

Here's how a typical MCP client might expose roots:

{

"roots": [

{

"uri": "file:///home/user/projects/frontend",

"name": "Frontend Repository"

},

{

"uri": "https://api.example.com/v1",

"name": "API Endpoint"

}

]

}This configuration suggests the server focus on both a local repository and an API endpoint while keeping them logically separated.

Source: https://modelcontextprotocol.io/docs/concepts/sampling

Let your servers request completions from LLMs

Sampling is a powerful MCP feature that allows servers to request LLM completions through the client, enabling sophisticated agentic behaviors while maintaining security and privacy.

This feature of MCP is not yet supported in the Claude Desktop client.The sampling flow follows these steps:

- Server sends a

sampling/createMessagerequest to the client - Client reviews the request and can modify it

- Client samples from an LLM

- Client reviews the completion

- Client returns the result to the server

This human-in-the-loop design ensures users maintain control over what the LLM sees and generates.

Sampling requests use a standardized message format:

{

messages: [

{

role: "user" | "assistant",

content: {

type: "text" | "image",

// For text:

text?: string,

// For images:

data?: string, // base64 encoded

mimeType?: string

}

}

],

modelPreferences?: {

hints?: [{

name?: string // Suggested model name/family

}],

costPriority?: number, // 0-1, importance of minimizing cost

speedPriority?: number, // 0-1, importance of low latency

intelligencePriority?: number // 0-1, importance of capabilities

},

systemPrompt?: string,

includeContext?: "none" | "thisServer" | "allServers",

temperature?: number,

maxTokens: number,

stopSequences?: string[],

metadata?: Record<string, unknown>

}The messages array contains the conversation history to send to the LLM. Each message has:

role: Either "user" or "assistant"content: The message content, which can be:- Text content with a

textfield - Image content with

data(base64) andmimeTypefields

- Text content with a

The modelPreferences object allows servers to specify their model selection preferences:

-

hints: Array of model name suggestions that clients can use to select an appropriate model:name: String that can match full or partial model names (e.g. "claude-3", "sonnet")- Clients may map hints to equivalent models from different providers

- Multiple hints are evaluated in preference order

-

Priority values (0-1 normalized):

costPriority: Importance of minimizing costsspeedPriority: Importance of low latency responseintelligencePriority: Importance of advanced model capabilities

Clients make the final model selection based on these preferences and their available models.

An optional systemPrompt field allows servers to request a specific system prompt. The client may modify or ignore this.

The includeContext parameter specifies what MCP context to include:

"none": No additional context"thisServer": Include context from the requesting server"allServers": Include context from all connected MCP servers

The client controls what context is actually included.

Fine-tune the LLM sampling with:

temperature: Controls randomness (0.0 to 1.0)maxTokens: Maximum tokens to generatestopSequences: Array of sequences that stop generationmetadata: Additional provider-specific parameters

The client returns a completion result:

{

model: string, // Name of the model used

stopReason?: "endTurn" | "stopSequence" | "maxTokens" | string,

role: "user" | "assistant",

content: {

type: "text" | "image",

text?: string,

data?: string,

mimeType?: string

}

}Here's an example of requesting sampling from a client:

{

"method": "sampling/createMessage",

"params": {

"messages": [

{

"role": "user",

"content": {

"type": "text",

"text": "What files are in the current directory?"

}

}

],

"systemPrompt": "You are a helpful file system assistant.",

"includeContext": "thisServer",

"maxTokens": 100

}

}When implementing sampling:

- Always provide clear, well-structured prompts

- Handle both text and image content appropriately

- Set reasonable token limits

- Include relevant context through

includeContext - Validate responses before using them

- Handle errors gracefully

- Consider rate limiting sampling requests

- Document expected sampling behavior

- Test with various model parameters

- Monitor sampling costs

Sampling is designed with human oversight in mind:

- Clients should show users the proposed prompt

- Users should be able to modify or reject prompts

- System prompts can be filtered or modified

- Context inclusion is controlled by the client

- Clients should show users the completion

- Users should be able to modify or reject completions

- Clients can filter or modify completions

- Users control which model is used

When implementing sampling:

- Validate all message content

- Sanitize sensitive information

- Implement appropriate rate limits

- Monitor sampling usage

- Encrypt data in transit

- Handle user data privacy

- Audit sampling requests

- Control cost exposure

- Implement timeouts

- Handle model errors gracefully

Sampling enables agentic patterns like:

- Reading and analyzing resources

- Making decisions based on context

- Generating structured data

- Handling multi-step tasks

- Providing interactive assistance

Best practices for context:

- Request minimal necessary context

- Structure context clearly

- Handle context size limits

- Update context as needed

- Clean up stale context

Robust error handling should:

- Catch sampling failures

- Handle timeout errors

- Manage rate limits

- Validate responses

- Provide fallback behaviors

- Log errors appropriately

Be aware of these limitations:

- Sampling depends on client capabilities

- Users control sampling behavior

- Context size has limits

- Rate limits may apply

- Costs should be considered

- Model availability varies

- Response times vary

- Not all content types supported

Source: https://modelcontextprotocol.io/docs/concepts/tools

Enable LLMs to perform actions through your server

Tools are a powerful primitive in the Model Context Protocol (MCP) that enable servers to expose executable functionality to clients. Through tools, LLMs can interact with external systems, perform computations, and take actions in the real world.

Tools are designed to be **model-controlled**, meaning that tools are exposed from servers to clients with the intention of the AI model being able to automatically invoke them (with a human in the loop to grant approval).Tools in MCP allow servers to expose executable functions that can be invoked by clients and used by LLMs to perform actions. Key aspects of tools include:

- Discovery: Clients can list available tools through the

tools/listendpoint - Invocation: Tools are called using the

tools/callendpoint, where servers perform the requested operation and return results - Flexibility: Tools can range from simple calculations to complex API interactions

Like resources, tools are identified by unique names and can include descriptions to guide their usage. However, unlike resources, tools represent dynamic operations that can modify state or interact with external systems.

Each tool is defined with the following structure:

{

name: string; // Unique identifier for the tool

description?: string; // Human-readable description

inputSchema: { // JSON Schema for the tool's parameters

type: "object",

properties: { ... } // Tool-specific parameters

},

annotations?: { // Optional hints about tool behavior

title?: string; // Human-readable title for the tool

readOnlyHint?: boolean; // If true, the tool does not modify its environment

destructiveHint?: boolean; // If true, the tool may perform destructive updates

idempotentHint?: boolean; // If true, repeated calls with same args have no additional effect

openWorldHint?: boolean; // If true, tool interacts with external entities

}

}Here's an example of implementing a basic tool in an MCP server:

```typescript const server = new Server({ name: "example-server", version: "1.0.0" }, { capabilities: { tools: {} } }); // Define available tools

server.setRequestHandler(ListToolsRequestSchema, async () => {

return {

tools: [{

name: "calculate_sum",

description: "Add two numbers together",

inputSchema: {

type: "object",

properties: {

a: { type: "number" },

b: { type: "number" }

},

required: ["a", "b"]

}

}]

};

});

// Handle tool execution

server.setRequestHandler(CallToolRequestSchema, async (request) => {

if (request.params.name === "calculate_sum") {

const { a, b } = request.params.arguments;

return {

content: [

{

type: "text",

text: String(a + b)

}

]

};

}

throw new Error("Tool not found");

});

```

</Tab>

<Tab title="Python">

```python

app = Server("example-server")

@app.list_tools()

async def list_tools() -> list[types.Tool]:

return [

types.Tool(

name="calculate_sum",

description="Add two numbers together",

inputSchema={

"type": "object",

"properties": {

"a": {"type": "number"},

"b": {"type": "number"}

},

"required": ["a", "b"]

}

)

]

@app.call_tool()

async def call_tool(

name: str,

arguments: dict

) -> list[types.TextContent | types.ImageContent | types.EmbeddedResource]:

if name == "calculate_sum":

a = arguments["a"]

b = arguments["b"]

result = a + b

return [types.TextContent(type="text", text=str(result))]

raise ValueError(f"Tool not found: {name}")

```

</Tab>

Here are some examples of types of tools that a server could provide:

Tools that interact with the local system:

{

name: "execute_command",

description: "Run a shell command",

inputSchema: {

type: "object",

properties: {

command: { type: "string" },

args: { type: "array", items: { type: "string" } }

}

}

}Tools that wrap external APIs:

{

name: "github_create_issue",

description: "Create a GitHub issue",

inputSchema: {

type: "object",

properties: {

title: { type: "string" },

body: { type: "string" },

labels: { type: "array", items: { type: "string" } }

}

}

}Tools that transform or analyze data:

{

name: "analyze_csv",

description: "Analyze a CSV file",

inputSchema: {

type: "object",

properties: {

filepath: { type: "string" },

operations: {

type: "array",

items: {

enum: ["sum", "average", "count"]

}

}

}

}

}When implementing tools:

- Provide clear, descriptive names and descriptions

- Use detailed JSON Schema definitions for parameters

- Include examples in tool descriptions to demonstrate how the model should use them

- Implement proper error handling and validation

- Use progress reporting for long operations

- Keep tool operations focused and atomic

- Document expected return value structures

- Implement proper timeouts

- Consider rate limiting for resource-intensive operations

- Log tool usage for debugging and monitoring

When exposing tools:

- Validate all parameters against the schema

- Sanitize file paths and system commands

- Validate URLs and external identifiers

- Check parameter sizes and ranges

- Prevent command injection

- Implement authentication where needed

- Use appropriate authorization checks

- Audit tool usage

- Rate limit requests

- Monitor for abuse

- Don't expose internal errors to clients

- Log security-relevant errors

- Handle timeouts appropriately

- Clean up resources after errors

- Validate return values

MCP supports dynamic tool discovery:

- Clients can list available tools at any time

- Servers can notify clients when tools change using

notifications/tools/list_changed - Tools can be added or removed during runtime

- Tool definitions can be updated (though this should be done carefully)

Tool errors should be reported within the result object, not as MCP protocol-level errors. This allows the LLM to see and potentially handle the error. When a tool encounters an error:

- Set

isErrortotruein the result - Include error details in the

contentarray

Here's an example of proper error handling for tools:

```typescript try { // Tool operation const result = performOperation(); return { content: [ { type: "text", text: `Operation successful: ${result}` } ] }; } catch (error) { return { isError: true, content: [ { type: "text", text: `Error: ${error.message}` } ] }; } ```<Tab title="Python">

```python

try:

# Tool operation

result = perform_operation()

return types.CallToolResult(

content=[

types.TextContent(

type="text",

text=f"Operation successful: {result}"

)

]

)

except Exception as error:

return types.CallToolResult(

isError=True,

content=[

types.TextContent(

type="text",

text=f"Error: {str(error)}"

)

]

)

```

</Tab>

This approach allows the LLM to see that an error occurred and potentially take corrective action or request human intervention.

Tool annotations provide additional metadata about a tool's behavior, helping clients understand how to present and manage tools. These annotations are hints that describe the nature and impact of a tool, but should not be relied upon for security decisions.

Tool annotations serve several key purposes:

- Provide UX-specific information without affecting model context

- Help clients categorize and present tools appropriately

- Convey information about a tool's potential side effects

- Assist in developing intuitive interfaces for tool approval

The MCP specification defines the following annotations for tools:

| Annotation | Type | Default | Description

|

| ----------------- | ------- | ------- |

------------------------------------------------------------------------------------------------------------------------------------ |

| title | string | - | A human-readable title for the tool, useful for UI display

|

| readOnlyHint | boolean | false | If true, indicates the tool does not modify its environment

|

| destructiveHint | boolean | true | If true, the tool may perform destructive updates (only meaningful when readOnlyHint is false)

|

| idempotentHint | boolean | false | If true, calling the tool repeatedly with the same arguments has no additional effect (only meaningful when

readOnlyHint is false) |

| openWorldHint | boolean | true | If true, the tool may interact with an "open world" of external entities

|

Here's how to define tools with annotations for different scenarios:

// A read-only search tool

{

name: "web_search",

description: "Search the web for information",

inputSchema: {

type: "object",

properties: {

query: { type: "string" }

},

required: ["query"]

},

annotations: {

title: "Web Search",

readOnlyHint: true,

openWorldHint: true

}

}

// A destructive file deletion tool

{

name: "delete_file",

description: "Delete a file from the filesystem",

inputSchema: {

type: "object",

properties: {

path: { type: "string" }

},

required: ["path"]

},

annotations: {

title: "Delete File",

readOnlyHint: false,

destructiveHint: true,

idempotentHint: true,

openWorldHint: false

}

}

// A non-destructive database record creation tool

{

name: "create_record",

description: "Create a new record in the database",

inputSchema: {

type: "object",

properties: {

table: { type: "string" },

data: { type: "object" }

},

required: ["table", "data"]

},

annotations: {

title: "Create Database Record",

readOnlyHint: false,

destructiveHint: false,

idempotentHint: false,

openWorldHint: false

}

}<Tab title="Python">

```python

from mcp.server.fastmcp import FastMCP

mcp = FastMCP("example-server")

@mcp.tool(

annotations={

"title": "Calculate Sum",

"readOnlyHint": True,

"openWorldHint": False

}

)

async def calculate_sum(a: float, b: float) -> str:

"""Add two numbers together.

Args:

a: First number to add

b: Second number to add

"""

result = a + b

return str(result)

```

</Tab>

-

Be accurate about side effects: Clearly indicate whether a tool modifies its environment and whether those modifications are destructive.

-

Use descriptive titles: Provide human-friendly titles that clearly describe the tool's purpose.

-

Indicate idempotency properly: Mark tools as idempotent only if repeated calls with the same arguments truly have no additional effect.

-

Set appropriate open/closed world hints: Indicate whether a tool interacts with a closed system (like a database) or an open system (like the web).

-

Remember annotations are hints: All properties in ToolAnnotations are hints and not guaranteed to provide a faithful description of tool behavior. Clients should never make security-critical decisions based solely on annotations.

A comprehensive testing strategy for MCP tools should cover:

- Functional testing: Verify tools execute correctly with valid inputs and handle invalid inputs appropriately

- Integration testing: Test tool interaction with external systems using both real and mocked dependencies

- Security testing: Validate authentication, authorization, input sanitization, and rate limiting

- Performance testing: Check behavior under load, timeout handling, and resource cleanup

- Error handling: Ensure tools properly report errors through the MCP protocol and clean up resources

Source: https://modelcontextprotocol.io/docs/concepts/transports

Learn about MCP's communication mechanisms

Transports in the Model Context Protocol (MCP) provide the foundation for communication between clients and servers. A transport handles the underlying mechanics of how messages are sent and received.

MCP uses JSON-RPC 2.0 as its wire format. The transport layer is responsible for converting MCP protocol messages into JSON-RPC format for transmission and converting received JSON-RPC messages back into MCP protocol messages.

There are three types of JSON-RPC messages used:

{

jsonrpc: "2.0",

id: number | string,

method: string,

params?: object

}{

jsonrpc: "2.0",

id: number | string,

result?: object,

error?: {

code: number,

message: string,

data?: unknown

}

}{

jsonrpc: "2.0",

method: string,

params?: object

}MCP includes two standard transport implementations:

The stdio transport enables communication through standard input and output streams. This is particularly useful for local integrations and command-line tools.

Use stdio when:

- Building command-line tools

- Implementing local integrations

- Needing simple process communication

- Working with shell scripts

const transport = new StdioServerTransport();

await server.connect(transport);

```

</Tab>

<Tab title="TypeScript (Client)">

```typescript

const client = new Client({

name: "example-client",

version: "1.0.0"

}, {

capabilities: {}

});

const transport = new StdioClientTransport({

command: "./server",

args: ["--option", "value"]

});

await client.connect(transport);

```

</Tab>

<Tab title="Python (Server)">

```python

app = Server("example-server")

async with stdio_server() as streams:

await app.run(

streams[0],

streams[1],

app.create_initialization_options()

)

```

</Tab>

<Tab title="Python (Client)">

```python

params = StdioServerParameters(

command="./server",

args=["--option", "value"]

)

async with stdio_client(params) as streams:

async with ClientSession(streams[0], streams[1]) as session:

await session.initialize()

```

</Tab>

SSE transport enables server-to-client streaming with HTTP POST requests for client-to-server communication.

Use SSE when:

- Only server-to-client streaming is needed

- Working with restricted networks

- Implementing simple updates

SSE transports can be vulnerable to DNS rebinding attacks if not properly secured. To prevent this:

- Always validate Origin headers on incoming SSE connections to ensure they come from expected sources

- Avoid binding servers to all network interfaces (0.0.0.0) when running locally - bind only to localhost (127.0.0.1) instead

- Implement proper authentication for all SSE connections

Without these protections, attackers could use DNS rebinding to interact with local MCP servers from remote websites.

```typescript import express from "express"; const app = express();

const server = new Server({

name: "example-server",

version: "1.0.0"

}, {

capabilities: {}

});

let transport: SSEServerTransport | null = null;

app.get("/sse", (req, res) => {

transport = new SSEServerTransport("/messages", res);

server.connect(transport);

});

app.post("/messages", (req, res) => {

if (transport) {

transport.handlePostMessage(req, res);

}

});

app.listen(3000);

```

</Tab>

<Tab title="TypeScript (Client)">

```typescript

const client = new Client({

name: "example-client",

version: "1.0.0"

}, {

capabilities: {}

});

const transport = new SSEClientTransport(

new URL("http://localhost:3000/sse")

);

await client.connect(transport);

```

</Tab>

<Tab title="Python (Server)">

```python

from mcp.server.sse import SseServerTransport

from starlette.applications import Starlette

from starlette.routing import Route

app = Server("example-server")

sse = SseServerTransport("/messages")

async def handle_sse(scope, receive, send):

async with sse.connect_sse(scope, receive, send) as streams:

await app.run(streams[0], streams[1], app.create_initialization_options())

async def handle_messages(scope, receive, send):

await sse.handle_post_message(scope, receive, send)

starlette_app = Starlette(

routes=[

Route("/sse", endpoint=handle_sse),

Route("/messages", endpoint=handle_messages, methods=["POST"]),

]

)

```

</Tab>

<Tab title="Python (Client)">

```python

async with sse_client("http://localhost:8000/sse") as streams:

async with ClientSession(streams[0], streams[1]) as session:

await session.initialize()

```

</Tab>

MCP makes it easy to implement custom transports for specific needs. Any transport implementation just needs to conform to the Transport interface:

You can implement custom transports for:

- Custom network protocols

- Specialized communication channels

- Integration with existing systems

- Performance optimization

// Send a JSON-RPC message

send(message: JSONRPCMessage): Promise<void>;

// Close the connection

close(): Promise<void>;

// Callbacks

onclose?: () => void;

onerror?: (error: Error) => void;

onmessage?: (message: JSONRPCMessage) => void;

}

```

</Tab>

<Tab title="Python">

Note that while MCP Servers are often implemented with asyncio, we recommend

implementing low-level interfaces like transports with `anyio` for wider compatibility.

```python

@contextmanager

async def create_transport(

read_stream: MemoryObjectReceiveStream[JSONRPCMessage | Exception],

write_stream: MemoryObjectSendStream[JSONRPCMessage]

):

"""

Transport interface for MCP.

Args:

read_stream: Stream to read incoming messages from

write_stream: Stream to write outgoing messages to

"""

async with anyio.create_task_group() as tg:

try:

# Start processing messages

tg.start_soon(lambda: process_messages(read_stream))

# Send messages

async with write_stream:

yield write_stream

except Exception as exc:

# Handle errors

raise exc

finally:

# Clean up

tg.cancel_scope.cancel()

await write_stream.aclose()

await read_stream.aclose()

```

</Tab>

Transport implementations should handle various error scenarios:

- Connection errors

- Message parsing errors

- Protocol errors

- Network timeouts

- Resource cleanup

Example error handling:

```typescript class ExampleTransport implements Transport { async start() { try { // Connection logic } catch (error) { this.onerror?.(new Error(`Failed to connect: ${error}`)); throw error; } } async send(message: JSONRPCMessage) {

try {

// Sending logic

} catch (error) {

this.onerror?.(new Error(`Failed to send message: ${error}`));

throw error;

}

}

}

```

</Tab>

<Tab title="Python">

Note that while MCP Servers are often implemented with asyncio, we recommend

implementing low-level interfaces like transports with `anyio` for wider compatibility.

```python

@contextmanager

async def example_transport(scope: Scope, receive: Receive, send: Send):

try:

# Create streams for bidirectional communication

read_stream_writer, read_stream = anyio.create_memory_object_stream(0)

write_stream, write_stream_reader = anyio.create_memory_object_stream(0)

async def message_handler():

try:

async with read_stream_writer:

# Message handling logic

pass

except Exception as exc:

logger.error(f"Failed to handle message: {exc}")

raise exc

async with anyio.create_task_group() as tg:

tg.start_soon(message_handler)

try:

# Yield streams for communication

yield read_stream, write_stream

except Exception as exc:

logger.error(f"Transport error: {exc}")

raise exc

finally:

tg.cancel_scope.cancel()

await write_stream.aclose()

await read_stream.aclose()

except Exception as exc:

logger.error(f"Failed to initialize transport: {exc}")

raise exc

```

</Tab>

When implementing or using MCP transport:

- Handle connection lifecycle properly

- Implement proper error handling

- Clean up resources on connection close

- Use appropriate timeouts

- Validate messages before sending

- Log transport events for debugging

- Implement reconnection logic when appropriate

- Handle backpressure in message queues

- Monitor connection health

- Implement proper security measures

When implementing transport:

- Implement proper authentication mechanisms

- Validate client credentials

- Use secure token handling

- Implement authorization checks

- Use TLS for network transport

- Encrypt sensitive data

- Validate message integrity

- Implement message size limits

- Sanitize input data

- Implement rate limiting

- Use appropriate timeouts

- Handle denial of service scenarios

- Monitor for unusual patterns

- Implement proper firewall rules

- For SSE transports, validate Origin headers to prevent DNS rebinding attacks

- For local SSE servers, bind only to localhost (127.0.0.1) instead of all interfaces (0.0.0.0)

Tips for debugging transport issues:

- Enable debug logging

- Monitor message flow

- Check connection states

- Validate message formats