The goal of this setup is to overload a single RGW so that adding another one would increase the throughput without overloading the OSDs.

- A machine with multiple NVMe drives and enough CPU/RAM to run both Ceph and the clients, e.g.:

      $ lsblk
      NAME        MAJ:MIN RM   SIZE RO TYPE MOUNTPOINT
      sda           8:0    0 893.8G  0 disk
      └─sda1        8:1    0 893.8G  0 part /
      nvme1n1     259:0    0   1.5T  0 disk
      nvme4n1     259:1    0   1.5T  0 disk
      nvme7n1     259:2    0   1.5T  0 disk
      nvme3n1     259:3    0   1.5T  0 disk
      nvme5n1     259:4    0   1.5T  0 disk
      nvme6n1     259:5    0   1.5T  0 disk
      nvme2n1     259:6    0   1.5T  0 disk
      nvme0n1     259:7    0   1.5T  0 disk
      └─nvme0n1p1 259:8    0   1.5T  0 part

- Run vstart with RGW compression enabled and the OSDs backed by the NVMe drives, e.g.:

sudo MON=1 OSD=2 MDS=0 MGR=0 RGW=2 ../src/vstart.sh -n --bluestore-devs "/dev/nvme7n1,/dev/nvme6n1" --rgw_compression zlib --bluestore -o "bluestore_block_size=1500000000000" -o "rgw_dynamic_resharding=false"

The workload uses multiple s3cmd clients to upload 1GB files in parallel (using multipart upload).

The attached script upload.sh can be used to upload 800 objects either to a single RGW or spread across 2 RGWs.
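The contents of upload.sh are not reproduced here. As a rough illustration only, the sketch below shows one way such a driver could spread uploads round-robin over one or two RGW endpoints. The endpoints `localhost:8000` and `localhost:8001` are assumed from the admin socket names used in this setup, and the file name, bucket name, object naming, and parallelism are hypothetical.

```python
import itertools
import subprocess
from concurrent.futures import ThreadPoolExecutor


def build_upload_commands(local_file, bucket, num_objects, endpoints):
    """Return one s3cmd argv list per object, assigned round-robin to the endpoints."""
    commands = []
    for index, endpoint in zip(range(num_objects), itertools.cycle(endpoints)):
        commands.append([
            "s3cmd", "put", local_file, f"s3://{bucket}/obj-{index}",
            f"--host={endpoint}",         # RGW endpoint that receives this upload
            f"--host-bucket={endpoint}",  # path-style bucket addressing
            "--no-ssl",
        ])
    return commands


def run_uploads(commands, parallelism=8):
    """Run the commands with a bounded number of concurrent s3cmd clients.

    Returns the exit code of each command, in the same order as `commands`.
    """
    with ThreadPoolExecutor(max_workers=parallelism) as pool:
        return list(pool.map(lambda cmd: subprocess.run(cmd).returncode, commands))


# Hypothetical usage, assuming a 1GB file "1g.bin" and an existing bucket "bench":
#   one RGW:  run_uploads(build_upload_commands("1g.bin", "bench", 800, ["localhost:8000"]))
#   two RGWs: run_uploads(build_upload_commands("1g.bin", "bench", 800,
#                                               ["localhost:8000", "localhost:8001"]))
```

Keeping the command construction separate from the execution makes it easy to check how objects are assigned to endpoints before starting a long run.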

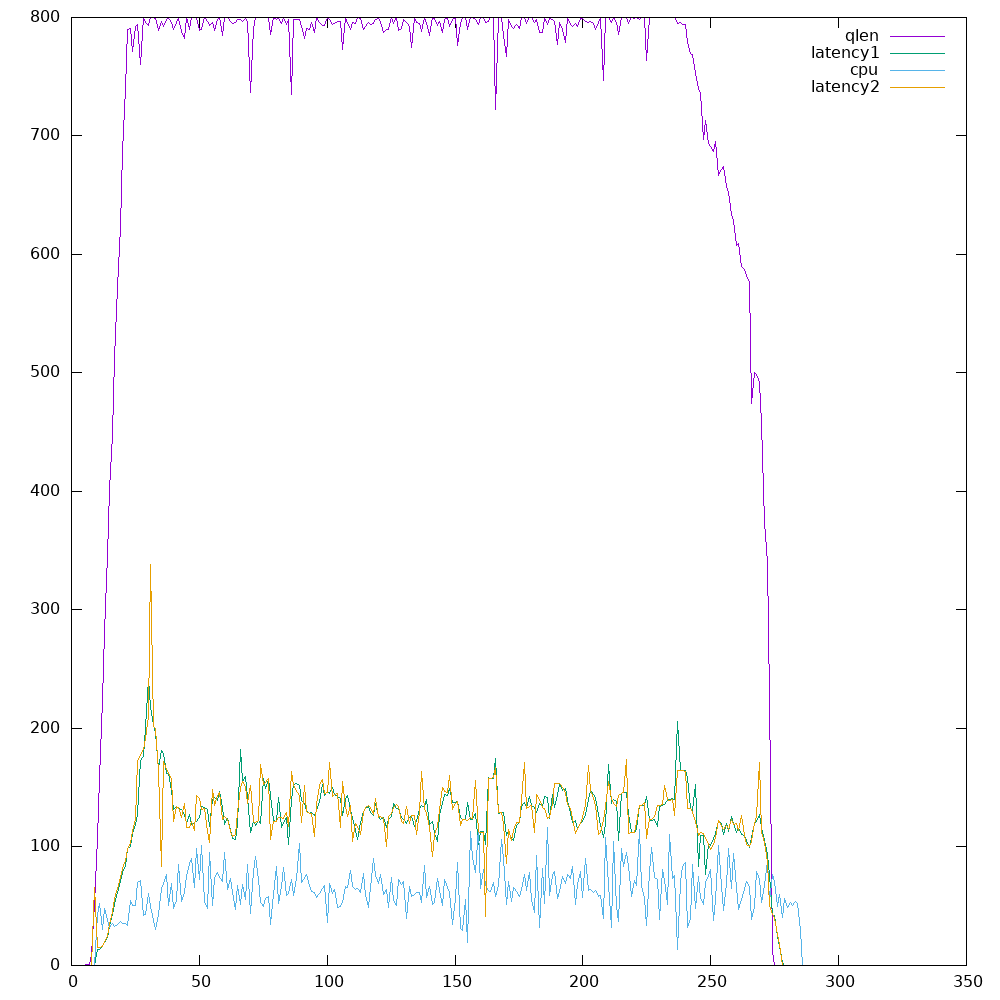

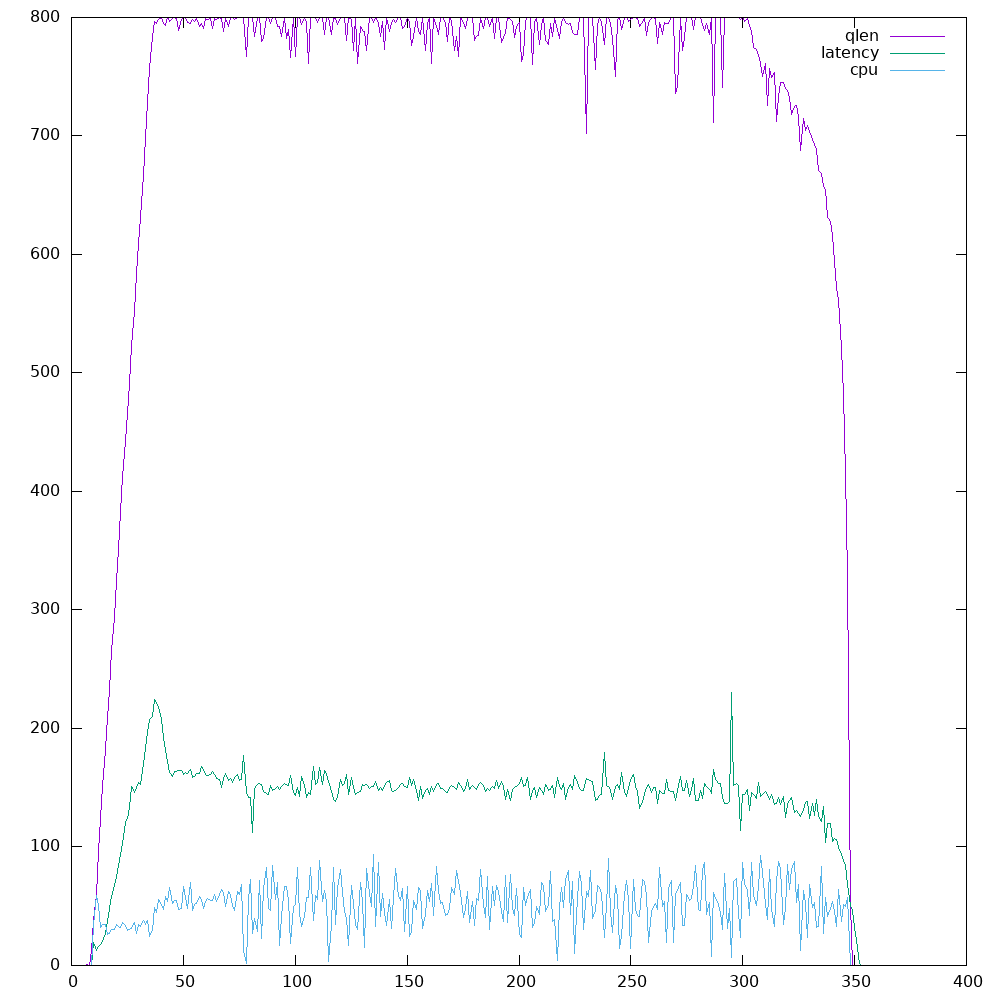

The following metrics can be used to estimate when adding another RGW would increase the overall throughput of the system:

- queue length: an estimate of how much pending work the RGW has

sudo ./bin/ceph --admin-daemon out/radosgw.8000.asok perf dump 2>/dev/null | jq .rgw.qlen

sudo ./bin/ceph --admin-daemon out/radosgw.8001.asok perf dump 2>/dev/null | jq .rgw.qlen

- object put latency:

sudo ./bin/ceph --admin-daemon out/radosgw.8000.asok perf dump 2>/dev/null | jq .rgw.put_initial_lat.avgtime

sudo ./bin/ceph --admin-daemon out/radosgw.8001.asok perf dump 2>/dev/null | jq .rgw.put_initial_lat.avgtime
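The same two counters can also be collected programmatically. The sketch below is a minimal example, not the metrics.py from this setup: it runs the `perf dump` command shown above and pulls out `rgw.qlen` and `rgw.put_initial_lat.avgtime`. The function names and the default `ceph_bin` path are illustrative, and the process may need to run under sudo to reach the admin socket.

```python
import json
import subprocess


def read_perf_dump(asok_path, ceph_bin="./bin/ceph"):
    """Run `ceph --admin-daemon <asok> perf dump` and return the parsed JSON."""
    result = subprocess.run(
        [ceph_bin, "--admin-daemon", asok_path, "perf", "dump"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


def extract_rgw_metrics(perf_dump):
    """Pick the queue length and the average put latency out of a perf dump."""
    rgw = perf_dump["rgw"]
    return {
        "qlen": rgw["qlen"],
        "put_avgtime": rgw["put_initial_lat"]["avgtime"],
    }


# Hypothetical usage against the two RGWs started by vstart:
#   for asok in ("out/radosgw.8000.asok", "out/radosgw.8001.asok"):
#       print(asok, extract_rgw_metrics(read_perf_dump(asok)))
```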

- Setting the threshold at 80% of the queue capacity would be useful, provided that the maximum queue capacity is set correctly. In some cases, increasing the queue capacity would increase the throughput, while in other cases adding another RGW would be more useful.

- The put latency is currently not using a sliding window. See this issue. To work around that, metrics.py calculates the latency over a 5-second sliding window.

- The put latency is mainly impacted by the object size and the OSD latency. An "RGW only" latency still needs to be added.
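To make the two remarks above concrete, here is a hedged sketch of how a sliding-window latency and the 80% queue check could be computed. It is not the actual metrics.py. It assumes the latency counter exposes cumulative `avgcount` and `sum` fields next to `avgtime`, so the latency over a window is the change in `sum` divided by the change in `avgcount`. The `queue_capacity` parameter is a hypothetical stand-in for whatever maximum queue capacity is configured.

```python
from collections import deque


class SlidingWindowLatency:
    """Average latency over the last `window_seconds`, from cumulative counters."""

    def __init__(self, window_seconds=5.0):
        self.window_seconds = window_seconds
        self.samples = deque()  # (timestamp, cumulative count, cumulative sum)

    def update(self, timestamp, avgcount, total):
        """Record a new sample and return the windowed latency, or None if idle."""
        self.samples.append((timestamp, avgcount, total))
        # Drop old samples, but keep the newest one that is at or before the
        # start of the window so it can serve as the baseline.
        window_start = timestamp - self.window_seconds
        while len(self.samples) > 1 and self.samples[1][0] <= window_start:
            self.samples.popleft()
        _, base_count, base_sum = self.samples[0]
        completed = avgcount - base_count
        if completed <= 0:
            return None  # no operation completed inside the window
        return (total - base_sum) / completed


def queue_is_saturated(qlen, queue_capacity, threshold=0.8):
    """True when the queue length reaches `threshold` of the (assumed) capacity."""
    return qlen >= threshold * queue_capacity
```

Each poll would feed `update()` with the current time and the `avgcount` and `sum` values of `put_initial_lat`. Unlike `avgtime`, which averages over the whole lifetime of the process, the returned value reacts within one window to a change in load.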